Lessons from the Trenches on Reproducible Evaluation of Language Models

May 23, 2024


Stella Biderman

Hailey Schoelkopf

Lintang Sutawika

Leo Gao

Jonathan Tow

Baber Abbasi

Alham Fikri Aji

Pawan Sasanka Ammanamanchi

Sidney Black

Jordan Clive

Anthony DiPofi

Julen Etxaniz

Benjamin Fattori

Jessica Zosa Forde

Charles Foster

Jeffrey Hsu

Mimansa Jaiswal

Wilson Y. Lee

Haonan Li

Charles Lovering

Niklas Muennighoff

Ellie Pavlick

Jason Phang

Aviya Skowron

Samson Tan

Xiangru Tang

Kevin A. Wang

Genta Indra Winata

François Yvon

Andy Zou

Abstract

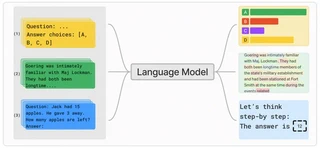

Effective evaluation of language models remains an open challenge in NLP. Researchers and engineers face methodological issues such as the sensitivity of models to evaluation setup, difficulty of proper comparisons across methods, and the lack of reproducibility and transparency. In this paper we draw on three years of experience in evaluating large language models to provide guidance and lessons for researchers. First, we provide an overview of common challenges faced in language model evaluation. Second, we delineate best practices for addressing or lessening the impact of these challenges on research. Third, we present the Language Model Evaluation Harness (lm-eval): an open source library for independent, reproducible, and extensible evaluation of language models that seeks to address these issues. We describe the features of the library as well as case studies in which the library has been used to alleviate these methodological concerns.

Type

Publication

ArXiv